2026-04-15 LLMs are keeping you from learning

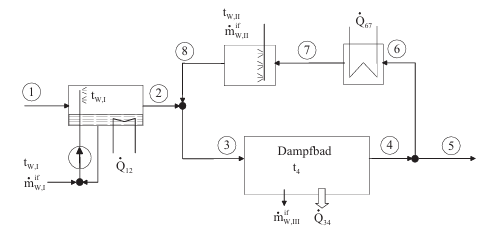

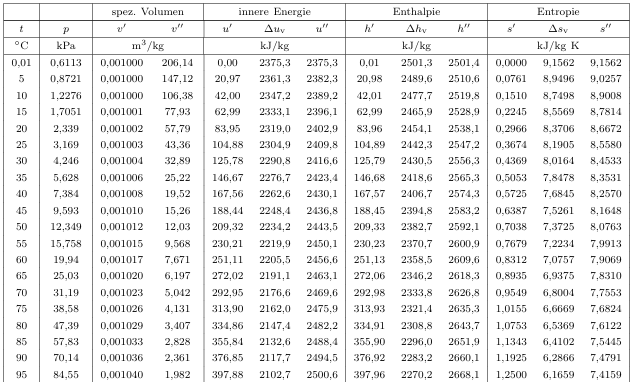

When I was at university for my Bachelor's in Mechanical Engineering, I had to take "Thermodynamics". This was (and probably still is) widely considered to be one of the hardest courses of the whole degree. In much of the coursework we were given some sort of physical process and had to calculate its energy and entropy using formulae, tables and graphs. As an example, depicted here is the AC for a steam bath and the temperature table for water vapor:

When working on these assignments at home, I would often fall into the same pattern:

- Be very motivated and start a new exercise

- Solve a few parts of it, but eventually get stuck

- Become frustrated, look up the solution and go "oh, yeah sure, that is obvious"

- Start a very similar exercise, but fail in the same way

Eventually I noticed that just reading the solution did not engrave it into my brain, and I wasn't able to apply it on my own.

The problems themselves were fairly small. Usually only a handful of correct steps were required to solve them, but at each step there would be dozens of different approaches, with only one (or sometimes a couple) leading to the correct solution. The others would lead to dead ends, clearly wrong results or (in the worst case) solutions that looked right at first glance but were in fact not.

I like to think of this as a tree where each arrow is some work you need to do and each branch is a decision you need to make. Some paths lead in completely the wrong direction, while others come really close to the correct solution, only to miss it by some margin. Usually only one series of choices will lead to the correct solution.

Just looking up the solution and not working on the problem gives you this "golden path", but it presents it to you like this:

It doesn't show all the branches, and it doesn't show all the decisions you needed to make. It looks trivial. So, the next time you are faced with the same problem, you might know what the end result looks like, but you still don't *really* know how to get there.

LLMs work in the same way. You input the problem and they come up with a solution. You don't really learn anything, and you do not get the tools to apply your new "knowledge" to other things.

Why does this matter?

You could argue that this does not matter, because today getting the end result is the only important part. Feast on it and move on. In uni we called this "Bulimie Lernen" / "bulimia-style learning": gulping down all the material, throwing it up for the exam, then forgetting it as soon as possible.

I would argue that knowing why something works and how to get there is still very important.

In my coursework I noticed that you can get really close to the correct solution with a completely wrong approach, and that even tiny errors can compound and lead you astray.

This happened to me a few times when coding or developing a product as well. The obvious danger lies in building something that can harm people: converting to the wrong data type in spacecraft software or miscalculating the stress on a bridge can have catastrophic consequences. Being able to scrutinize results, to distinguish between "actually correct" and "looks about right", and to understand how the pieces interact is key.
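A small, harmless example of a tiny error that compounds into something that only "looks about right" is floating-point rounding. The following minimal Python sketch adds 0.1 ten times; the helper `accumulate` is a hypothetical name chosen for illustration, while `math.isclose` and `math.fsum` come from Python's standard library:

```python
import math


def accumulate(step: float, count: int) -> float:
    """Naively add `step` to a running total `count` times (hypothetical helper)."""
    total = 0.0
    for _ in range(count):
        total += step  # each addition is rounded to the nearest binary float
    return total


total = accumulate(0.1, 10)
print(total)                     # 0.9999999999999999: "looks about right"
print(total == 1.0)              # False: not actually correct
print(math.isclose(total, 1.0))  # True: equal within a relative tolerance
print(math.fsum([0.1] * 10))     # 1.0: fsum compensates for the lost low-order bits
```

The printed result passes a casual glance, and only knowing how the computation works tells you whether "close" is good enough for the problem at hand.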

Even in less critical scenarios, lacking this ability is a problem. Often the software or product you create is only a stepping stone towards a bigger solution. Features interconnect and form an architecture. Your "looks right" solution can quickly turn into a mess when you notice that performance falls short for the new features you want to add, or that interfaces do not line up.

This often creates more work in the long run, because nothing you do now will lead you back to the golden path, and you cannot easily go back and change decisions made much earlier: they are part of the architecture now.

One could still argue that in the future this will not matter, because we will just prompt LLMs and other AI tools again and they will fix the mess for us.

Well, maybe. Right now, certainly not.

And even if that is true, we have all experienced running around in circles with an AI. Being able to lay out a path for it, by feeding it very specific ideas or explicitly steering it away from danger, can save you a lot of time and money spent on tokens.

By knowing how something is built and understanding the decisions that led to it, you can also inspect and judge results from others more diligently. You build up an intuition about your field, your work and the problems you usually face. You get to apply that knowledge to problems outside of your scope and to tackle new and bigger problems more easily in the future, because you don't start from scratch every time.

What can you do about it?

Not using LLMs and AI tools at all is one way, but even I would argue that is probably not the best approach.

For one, when learning or approaching a new problem, try to get as far on your own as you can. Only use LLMs as a last resort, and try a prompt like "I am working on XYZ and am stuck on ABC. Can you give me a small tip on how to proceed, but not the full solution?" Instead of handing the controller to your older brother, try to gather tips and accept failing a few times before you eventually beat the next boss.

When you think you have finally understood something, try explaining it to other people. You will quickly find out whether you actually do (the Feynman Technique).

When working with ready-made solutions always scrutinize them. Try to really understand each step taken and ask yourself "would I have done it the same way?" or "could it have been solved in a different way? why would that not work?".

The YouTuber 3Blue1Brown does this in almost every one of his videos. He pauses at a crucial moment and asks the viewer to come up with the solution. Do that. Pause. Take your time. Break out of the content machine that constantly feeds you dopamine. It will deepen your understanding and help you in the long run.

In my experience, not knowing how to solve things and just relying on external help leads to a lot of frustration, whereas understanding the things you care about helps you every day. That understanding often spills over into unexpected fields, too, because everything is connected in some way (gamification is everywhere, biology inspires mechanics, the same theory applies to bottlenecks in production as to bottlenecks in software, etc.).

I am hopeful that people will realize that learning and deep understanding are as valuable nowadays as they were in the past. LLMs are an incredible tool, but a tool nonetheless. We need people who know their craft to build a better future together.